NVIDIA ConnectX-6 InfiniBand Adapter MCX653105A-HDAT – 200Gb/s RDMA Hardware Encryption

Product details:

| Brand: | Mellanox |

|---|---|

| Model number: | MCX653106A-HDAT-SP |

| Document: | connectx-6-infiniband.pdf |

Payment:

| Minimum order quantity: | 1 piece |

|---|---|

| Price: | Negotiable |

| Packaging details: | Outer box |

| Delivery time: | Based on stock availability |

| Payment terms: | T/T |

| Supply ability: | Supplied per project/batch |

Detailed information:

| Product status: | In stock | Application: | Server |

|---|---|---|---|

| Condition: | New and original | Type: | Wired |

| Maximum speed: | Up to 200 Gb/s | Ethernet connector: | QSFP56 |

| Model: | MCX653106A-HDAT | Name: | MCX653106A-HDAT-SP Mellanox 200GbE Network Card, High-Speed, Smart, Secure |

Product description

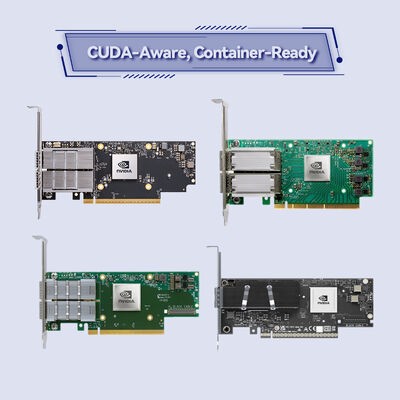

MCX653105A-HDAT – Single-Port 200Gb/s

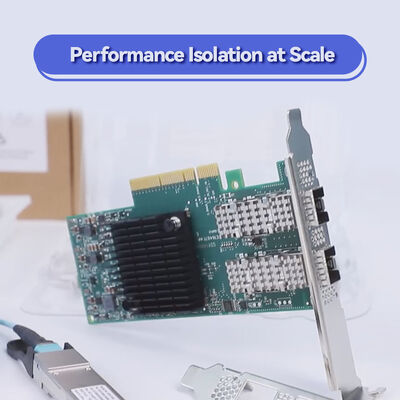

Engineered for demanding HPC, AI, and hyperscale cloud infrastructures, the NVIDIA® ConnectX®-6 MCX653105A-HDAT smart adapter card delivers 200Gb/s bandwidth on a single QSFP56 port with In-Network Computing acceleration. By offloading data movement and security tasks from the CPU, it improves efficiency, scalability, and security for workloads ranging from deep neural network training to real-time data analytics and NVMe-oF storage.

As a core component of the NVIDIA Quantum InfiniBand platform, ConnectX-6 enables end-to-end RDMA, hardware-based reliable transport, and advanced congestion control. The MCX653105A-HDAT single-port model offers a cost-effective entry into 200Gb/s HDR InfiniBand and 200GbE networks. It integrates block-level XTS-AES encryption, NVMe over Fabrics (NVMe-oF) offloads, and GPUDirect RDMA acceleration, which suits it to GPU-accelerated clusters, software-defined storage, and high-frequency trading environments where low latency and security are paramount.

NVIDIA ConnectX-6 extends Remote Direct Memory Access (RDMA) with hardware offloads for MPI tag matching, out-of-order RDMA supporting Adaptive Routing, and Dynamically Connected Transport (DCT), which help it scale efficiently across thousands of nodes. The adapter's In-Network Memory capability enables registration-free RDMA memory access, reducing software overhead. Over a PCIe Gen 4.0 host interface, data moves directly between memory and network, freeing CPU cycles for application logic. Additionally, hardware-based reliable transport and end-to-end packet-level flow control protect data integrity under heavy load.

With support for RoCE (RDMA over Converged Ethernet), overlay network tunneling offloads, and intelligent interrupt coalescence, ConnectX-6 provides a unified smart fabric for both InfiniBand and Ethernet environments.

- High Performance Computing (HPC): Large-scale simulations, weather modeling, computational chemistry requiring deterministic low latency.

- AI & Machine Learning: Accelerate distributed training of deep neural networks with GPUDirect RDMA and 200Gb/s throughput.

- NVMe-oF Storage Arrays: Build high-performance NVMe/TCP or NVMe/RDMA storage targets with hardware offloads, reducing CPU load by up to 30%.

- Hyperscale Cloud & NFV: Efficient service chaining, OVS offload (ASAP²), and SR-IOV for up to 1K virtual functions.

- Financial Services & Trading: Ultra-low latency market data distribution and algorithmic trading infrastructure.

ConnectX-6 MCX653105A-HDAT is compatible with a wide range of servers, switches, and OS environments. It supports InfiniBand switches up to 200Gb/s (HDR) and Ethernet switches up to 200Gb/s with auto-negotiation and all FEC modes. The adapter works across x86, Power, Arm, GPU, and FPGA-based platforms.

| Category | Supported Options / Standards |

|---|---|

| Operating Systems | RHEL, SLES, Ubuntu, other major Linux distributions, Windows Server, FreeBSD, VMware vSphere |

| InfiniBand Specification | IBTA 1.3 compliant, 200/100/50/25/10Gb/s, 8 virtual lanes + VL15, hardware-based congestion control |

| Ethernet Standards | 200/100/50/40/25/10/1GbE, IEEE 802.3bj, 802.3by, 802.3ba, PFC, ETS, DCB, 1588v2, Jumbo frame (9.6KB) |

| CPU Offloads & Virtualization | SR-IOV (up to 1K VFs), NPAR, DPDK, ASAP² OVS offload, tunnel encapsulation/decapsulation (VXLAN, NVGRE, Geneve) |

| Management & Boot | NC-SI, MCTP over SMBus/PCIe, PLDM (DSP0248/DSP0267), UEFI, PXE, iSCSI remote boot, InfiniBand remote boot |

| Parameter | Detail |

|---|---|

| Product Model | MCX653105A-HDAT |

| Form Factor | PCIe stand-up card; tall bracket mounted, short (low-profile) bracket included as an accessory |

| Network Ports | 1x QSFP56 (single-port) |

| Supported Speeds (InfiniBand) | 200/100/50/25/10 Gb/s |

| Supported Speeds (Ethernet) | 200/100/50/40/25/10/1 Gb/s |

| Host Interface | PCIe Gen 3.0/4.0 x16 (also supports x8, x4, x2, x1) |

| Maximum Bandwidth | 200Gb/s |

| Message Rate | Up to 215 million messages per second |

| Latency | Sub-microsecond (RDMA) |

| Hardware Encryption | XTS-AES 256/512-bit block-level encryption, FIPS capable |

| Storage Offloads | NVMe-oF target/initiator, T10-DIF, SRP, iSER, NFS RDMA, SMB Direct |

| Virtualization | SR-IOV (up to 1K VFs), VMware NetQueue, QoS per VM |

| Remote Boot | InfiniBand, Ethernet, iSCSI, UEFI, PXE |

| Dimensions (without bracket) | 167.65mm x 68.90mm |

| RoHS & Compliance | RoHS compliant, ODCC compatible |
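The host interface row deserves a quick arithmetic check, because the slot generation decides whether the port can actually be fed at 200Gb/s. The sketch below is a back-of-the-envelope estimate only: it uses the standard per-lane signalling rates for PCIe Gen 3 and Gen 4 (8 and 16 GT/s) and their 128b/130b line coding, and it ignores packet and protocol overhead, so real throughput will be somewhat lower. The function names are illustrative and not part of any NVIDIA tool.

```python
# Per-lane signalling rate in GT/s for the PCIe generations listed in the table.
PCIE_GT_PER_LANE = {3: 8.0, 4: 16.0}

# Gen 3 and Gen 4 both use 128b/130b line coding.
ENCODING_EFFICIENCY = 128 / 130


def pcie_usable_gbps(gen: int, lanes: int) -> float:
    """Upper bound on link bandwidth in Gb/s, before protocol overhead."""
    return PCIE_GT_PER_LANE[gen] * lanes * ENCODING_EFFICIENCY


def host_link_can_feed_port(gen: int, lanes: int, port_gbps: float = 200.0) -> bool:
    """True if the slot's upper-bound bandwidth is at least the port speed."""
    return pcie_usable_gbps(gen, lanes) >= port_gbps


if __name__ == "__main__":
    for gen, lanes in [(3, 16), (4, 8), (4, 16)]:
        gbps = pcie_usable_gbps(gen, lanes)
        verdict = "ok" if host_link_can_feed_port(gen, lanes) else "below 200Gb/s"
        print(f"Gen {gen} x{lanes}: {gbps:.1f} Gb/s ({verdict})")
```

Under these assumptions a Gen 3 x16 or Gen 4 x8 slot tops out near 126 Gb/s, while a Gen 4 x16 slot offers roughly 252 Gb/s, so only the latter leaves headroom for a single 200Gb/s port.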

| Ordering Part Number (OPN) | Ports / Speed | Host Interface | Key Features |

|---|---|---|---|

| MCX653105A-HDAT | 1x QSFP56, up to 200Gb/s | PCIe 3.0/4.0 x16 | Single-port, crypto support, standard bracket |

| MCX653106A-HDAT | 2x QSFP56, up to 200Gb/s | PCIe 3.0/4.0 x16 | Dual-port, crypto support |

| MCX653105A-ECAT | 1x QSFP56, up to 100Gb/s | PCIe 3.0/4.0 x16 | 100Gb/s variant, no crypto |

| MCX653435A-HDAT (OCP 3.0) | 1x QSFP56, 200Gb/s | PCIe x16 | OCP 3.0 small form factor |

| MCX654105A-HCAT | 1x QSFP56, Socket Direct | 2x PCIe 3.0 x16 | Socket Direct with dedicated CPU access |

Note: For cold plate variants for liquid-cooled Intel Server System D50TNP, please contact Starsurge for customized ordering.

- 100% authentic NVIDIA ConnectX-6 adapters with full batch traceability.

- Warehouse hubs in Asia, Europe, and Americas serving 50+ countries.

- Firmware configuration, RDMA tuning, NVMe-oF validation, driver assistance.

- Long-term partnership with NVIDIA distributors, volume discounts available.

- Hassle-free replacement and advanced cross-ship for critical deployments.

- English, Chinese, Japanese support; custom integration and labeling services.

Hong Kong Starsurge Group provides end-to-end support, from compatibility checks and firmware customization to on-site deployment guidance. We offer dedicated technical account managers for data center upgrades and proof-of-concept (PoC) testing. All adapters are shipped with anti-static packaging and optional installation kits.

Support offerings include: 24/5 engineering support ticketing system, advanced replacement for business-critical environments, driver & software stack assistance (OFED, WinOF-2, DPDK), and remote troubleshooting.

- Confirm server mechanical clearance: standard height PCIe bracket included; low-profile bracket also provided as accessory.

- For liquid-cooled platforms (Intel D50TNP), verify cold plate option availability before ordering — standard air-cooled bracket is default.

- Please confirm driver compatibility with your Linux distribution version — NVIDIA MLNX_OFED recommended for optimal performance.

- Exact power consumption at full 200Gb/s load is not publicly specified; refer to the NVIDIA user manual or contact Starsurge for typical values (approx. 13-16W).

- The hardware is FIPS capable, but FIPS compliance may require a specific firmware version; notify the sales team if FIPS compliance is mandatory for your deployment.

- Single-port adapter cannot be used for port redundancy; for high-availability designs, consider dual-port MCX653106A-HDAT.

Founded in 2008, Hong Kong Starsurge Group Co., Limited is a technology-driven provider of network hardware, IT services, and system integration solutions. With a global customer base spanning government, healthcare, manufacturing, education, finance, and enterprise sectors, Starsurge delivers high-performance networking equipment including switches, NICs, wireless solutions, and tailored software. The company combines experienced sales and technical teams to support complex infrastructure projects, IoT deployments, and network management systems. Customer-first approach, reliable quality, and responsive global delivery make Starsurge a trusted partner for next-generation data centers.

| Fact | Value |

|---|---|

| Maximum Throughput | 200Gb/s (single port) |

| On-chip Acceleration Engines | Tag matching, rendezvous offload, collective offloads, burst buffer offload |

| Maximum Virtual Functions | Up to 1024 VFs per adapter |

| Encryption Standard | XTS-AES 256/512-bit, hardware offloaded from CPU |

| Adaptive Routing Support | Out-of-order RDMA with Adaptive Routing |

| In-Network Memory | Registration-free RDMA memory access |

| Server / Platform | CPU Architecture | Tested OS / Environment |

|---|---|---|

| Dell PowerEdge R750/R760 | Intel Xeon Scalable Gen 3/4 | RHEL 8.6+, Ubuntu 22.04, VMware ESXi 7.0/8.0 |

| HPE ProLiant DL380 Gen10/Gen11 | Intel Xeon | SLES 15 SP4, Windows Server 2022 |

| Supermicro Ultra SuperServer | AMD EPYC 7002/7003 | Ubuntu 20.04/22.04, Rocky Linux 8 |

| Lenovo ThinkSystem SR650/SR655 | Intel / AMD EPYC | RHEL 9, Windows Server 2019 |

| NVIDIA DGX A100 / H100 | x86_64 / NVIDIA Arm | Ubuntu with MLNX_OFED, NVIDIA HPC SDK |

- ☑ PCIe slot availability: x16 mechanical (electrical x16/x8/x4 supported)

- ☑ Required port speed: 200Gb/s or lower; cable type (QSFP56 passive DAC or active optics)

- ☑ OS driver version: Check MLNX_OFED or WinOF-2 compatibility with your kernel

- ☑ Encryption requirement: Standard AES-XTS or FIPS mode (special firmware)

- ☑ Cooling and bracket: Standard air-cooled or cold plate option for liquid cooling

- ☑ Quantity and lead time: Stock confirmation with Starsurge sales team

- ☑ Intended application: Single-port sufficient, or need dual-port redundancy?
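The first checklist item (PCIe slot) can be verified on a Linux host after installation. The sketch below is a minimal example, assuming the standard Linux sysfs attributes `current_link_speed` and `current_link_width` under `/sys/bus/pci/devices/<address>/`. The helper names and the PCI address in the usage note are hypothetical, and the exact text of the speed attribute varies between kernel versions, so the parser only reads the leading GT/s number.

```python
import re
from pathlib import Path


def parse_link_speed(text: str) -> float:
    """Return the GT/s figure from a sysfs string such as '16.0 GT/s PCIe'."""
    match = re.match(r"\s*(\d+(?:\.\d+)?)\s*GT/s", text)
    if match is None:
        raise ValueError(f"unrecognised link speed: {text!r}")
    return float(match.group(1))


def link_is_gen4_x16(speed_text: str, width_text: str) -> bool:
    """True if the negotiated link runs at 16 GT/s or faster on 16 or more lanes."""
    return parse_link_speed(speed_text) >= 16.0 and int(width_text.strip()) >= 16


def read_link(pci_address: str, sysfs_root: str = "/sys/bus/pci/devices"):
    """Read the negotiated speed and width strings for one PCI device."""
    device = Path(sysfs_root) / pci_address
    speed = (device / "current_link_speed").read_text().strip()
    width = (device / "current_link_width").read_text().strip()
    return speed, width
```

On a host with the adapter installed, `link_is_gen4_x16(*read_link("0000:3b:00.0"))` would report whether the card negotiated a Gen 4 x16 link; the address shown is a placeholder, and the real one can be found with `lspci`.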

QM8700 / QM9700 series, 40 ports HDR 200Gb/s, fully managed

Optimized for Ethernet and RoCE, 200Gb/s with low power

InfiniBand/Ethernet with programmable data path and isolation

DAC, AOC, and active fiber cables for 200G QSFP56

- ▸ NVIDIA ConnectX-6 User Manual (Firmware & Configuration Guide)

- ▸ RDMA over Converged Ethernet (RoCE) Deployment Best Practices

- ▸ NVMe-oF with ConnectX-6: Performance Tuning Guide

- ▸ GPUDirect RDMA for AI Clusters – Technical Overview

- ▸ Block-level Encryption Setup for FIPS Environments